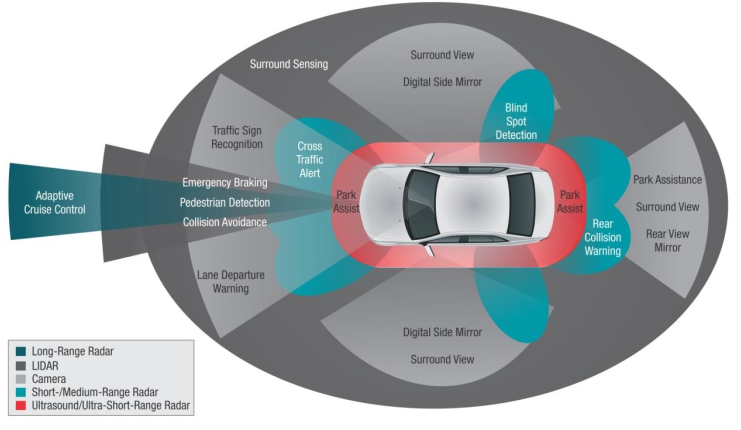

Today’s vehicles sense their road environments, such as other vehicles, pedestrians or obstacles, using local perception sensors. Environmental perception is a core component of future automated driving systems. Our team is dedicated to the research and development of advanced vision-based detection, simultaneous localization and mapping (SLAM), and sensor fusion algorithms and techniques.

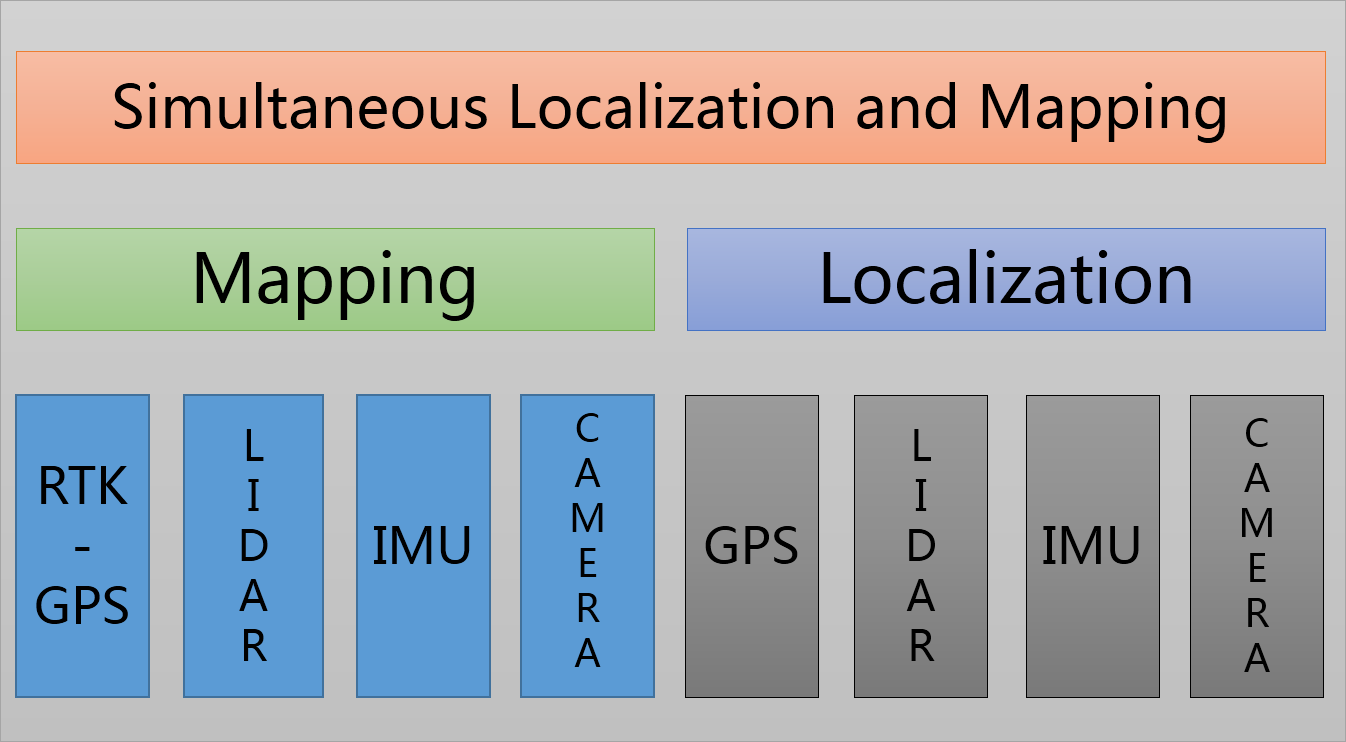

Simultaneous localization and mapping (SLAM) is the problem of continuously constructing a map of the environment being explored while simultaneously computing the vehicle’s location within it. Although 3D LIDAR is popular in SLAM because of its high robustness, its high cost hinders widespread use. One alternative is to perform SLAM with digital cameras by extracting vision-based depth information. Because of the nature of electronic devices, individual sensors commonly measure physical phenomena with some degree of error. In our project, SLAM is realized with a stereo camera fused with other low-cost sensors.
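As a rough illustration of the two ideas above, the following minimal Python sketch recovers depth from stereo disparity using the standard pinhole relation Z = f·B/d, then combines that estimate with a second noisy measurement by inverse-variance weighting. It is not our project's implementation: the function names, the camera parameters and the variance values are all hypothetical, chosen only to make the example concrete, and the fusion step assumes independent, unbiased measurements.

```python
def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Depth (metres) of a point seen by a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def fuse_inverse_variance(estimates):
    """Fuse independent (value, variance) pairs by inverse-variance weighting.

    Returns the fused value and its variance. The fused variance is never
    larger than the smallest input variance.
    """
    weights = [1.0 / variance for _, variance in estimates]
    total_weight = sum(weights)
    fused_value = sum(w * value for w, (value, _) in zip(weights, estimates)) / total_weight
    return fused_value, 1.0 / total_weight


# Hypothetical camera: 700 px focal length, 12 cm baseline, 8.4 px disparity.
stereo_depth = depth_from_disparity(focal_px=700.0, baseline_m=0.12, disparity_px=8.4)

# Hypothetical second range reading of 9.6 m with a smaller variance than
# the stereo estimate; variances are in square metres.
fused_depth, fused_var = fuse_inverse_variance([(stereo_depth, 0.25), (9.6, 0.04)])
print(f"stereo: {stereo_depth:.2f} m, fused: {fused_depth:.2f} m, variance: {fused_var:.3f}")
```

Because each measurement is weighted by the inverse of its variance, the fused depth sits closer to the more reliable reading, which is one simple way low-cost sensors can compensate for each other's errors.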

Additionally, the autonomous vehicle gains improved knowledge of the global and local scenes through the collaborative use of RTK-GPS, which achieves a localization error of less than 10 centimeters. Once sufficient localization information has been acquired, our further research focuses on developing algorithms for secure, efficient path planning and real-time obstacle avoidance. Our concept of autonomous driving is demonstrated on an electric vehicle developed in-house, with integrated LIDAR, RTK-GPS and vision sensors.

Selected Papers

Hui Huang, Huiyun Li*, Wenqi Fang, Shaobo Dang, Cuiping Shao, Tianfu Sun, Data Redundancy Mitigation in V2X based Collective Perceptions, IEEE Access, Volume 8, Pages 13405–13418, 10 January 2020 (IF = 4.098, SCI, JCR Q1)

Lutao Chu, Huiyun Li, Zhiheng Yang, Accurate Scale Estimation for Visual Tracking with Significant Deformation, IET Computer Vision, 14(5), 2020 (IF = 1.516, SCI, JCR Q3)

Selected Patents

Invention and PCT patent: An autonomous driving decision-making method based on clustering and extreme learning machines, 2017/5/12, PCT/CN2017/084081

Invention patent: Method, apparatus, device and medium for generating a vehicle autonomous driving control strategy model, 2018/2/27, CN201810163708.7